I moved into my current house four years ago today!

This is my first house, but not the first time I've lived away from my parents' house: that was in 2013, when I moved into my halls of residence at university.

It was strangely easy buying my house back in 2021, as I had saved up enough money myself (I was saving an awesome £1200 to £1600 a month at the time and had been for the previous 4 years) and I got a slight discount of 5% for being a teacher, so I had the deposit I needed. The purchase itself started in August 2020, when I put down my deposit for the house to be ready for April 2021. I remember the date kept moving between April and June, but in the end it turned out to be February.

Living here has been so much nicer than living at home (obviously) and I've done a lot to the house since moving in: I've run a network around the house, had my garden done beautifully (thanks to mum), had my loft boarded (I couldn't live without this as I'd have nowhere to store stuff), installed my own smart home with Home Assistant, and I've now got my fabulous CleverCloset understairs storage.

I'm always happy to come home to this place and, whilst I have plans to move to a different lifestyle in the next few years, I will continue to enjoy living here.

Today marks a special day. Today, four years ago, I got the keys to my first house! It was a wonderful day until I was told I couldn't move in because the mortgage provider hadn't released the funds on time, so I had to wait over the weekend until Monday.

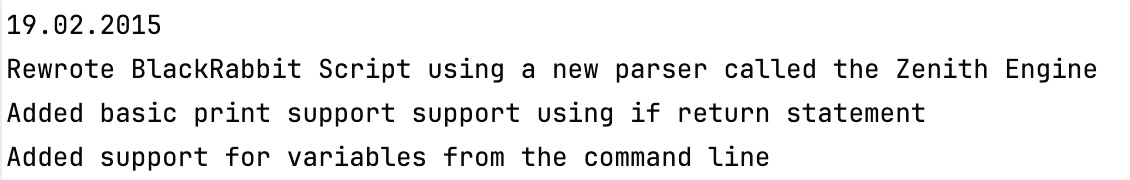

However, perhaps more significantly, ZPE 1.2 was released on this day ten years ago. 1.2 was the most significant change to my programming language and environment ever and was the first version of ZPE that replaced BlackRabbit 1.1. BlackRabbit was the precursor to ZPE, but the performance was never on par. It was named after Petro, my adorable and wonderful little black rabbit.

After a weekend of looking at my whiteboard, this is what I've come up with.

I'm pleased to announce that after nearly ten years of using hash maps in ZPE, I've switched to using my new binary search tree implementation. This improves performance further, since in some cases my hash map, which is some 8 years old, would suffer collisions that in turn produced an O(n) case. This was not good.

The worst case for a binary search tree is indeed also O(n), and its typical lookup, O(log n) when the tree is reasonably balanced, is actually worse than that of a hash map, which is O(1). Even so, I've been testing out a new binary search tree I've developed and it performs marginally better. What's really cool about the binary search tree implementation is that it is a drop-in replacement for the hash map I've been using for the last few years, with methods like put, get and so on.
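To give a rough idea of what "drop-in replacement" means here, below is a minimal Python sketch of a binary search tree that exposes the same put and get methods as a hash map. This is not the ZPE implementation (which isn't shown in this post); the class names `TreeMap` and `_Node` are made up for illustration, and the tree is deliberately unbalanced, so inserting keys in sorted order would hit the O(n) worst case mentioned above.

```python
class _Node:
    """A single tree node holding one key/value pair (hypothetical helper)."""

    def __init__(self, key, value):
        self.key = key
        self.value = value
        self.left = None
        self.right = None


class TreeMap:
    """Unbalanced binary search tree with a hash-map-like put/get interface."""

    def __init__(self):
        self._root = None
        self._size = 0

    def put(self, key, value):
        """Insert a new key, or overwrite the value of an existing key."""
        if self._root is None:
            self._root = _Node(key, value)
            self._size += 1
            return
        node = self._root
        while True:
            if key == node.key:
                node.value = value  # existing key: overwrite, size unchanged
                return
            if key < node.key:
                if node.left is None:
                    node.left = _Node(key, value)
                    self._size += 1
                    return
                node = node.left
            else:
                if node.right is None:
                    node.right = _Node(key, value)
                    self._size += 1
                    return
                node = node.right

    def get(self, key, default=None):
        """Return the value stored for key, or default if the key is absent."""
        node = self._root
        while node is not None:
            if key == node.key:
                return node.value
            node = node.left if key < node.key else node.right
        return default

    def __len__(self):
        return self._size
```

Because callers only ever touch put and get, swapping this in for a hash map needs no changes elsewhere, provided the keys can be compared with `<` (a hash map only needs them to be hashable).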

Throughout the next few weeks I'll continue to assess this.

It's sad that I have to move from Disqus after moving back to it only a few years ago, but Disqus decided to force ads onto the free tier when a website becomes busy enough. Because I'm now actively getting 6,000 to 8,000 visits a day, Disqus now force ads on to my website, which I think is totally unacceptable and not part of the original model of this website. As a result, I'm building my own comment system again.

Please continue to use Disqus in the meantime.

BalfML, my own markup language, is now available to view on my GitHub as a formal specification.

Whilst the specification gives some clarity as to what the language will look like, it is far from finished. There are plans to update the formal specification, and indeed the markup itself, as I begin to develop Java, Python and C# libraries that will parse the data into the correct format.

The formal specification of BalfML is similar to that of the specificationless INI format and the TOML markup language. However, BalfML has a few additional data types and features.

I will soon build this in Java as well as for ZPE.

I also intend to use this as a configuration format for ZPE soon enough.

The latest version of ZPE is here, and it's met the original target I set out. This might be a small update in terms of the number of features, but the features themselves are pretty big.

First of all, we've got the new ChatGPT model. This model allows for direct communication with ChatGPT using an API key. The setup is simple and is done within the properties file. It can be used to assist in the editor and can also be used as an object in code.

Second, we've got two new parsers: TOML and INI. The two formats are similar, but each offers its own advantages. I like TOML as a format and have considered using it as the main form of property storage in ZPE. INI is simple and basic and less likely to find use with me, but it's great to finally have two new data formats that can be decoded by ZPE.

ZPE 1.13.2 will be released very soon and I'm also planning two releases in February. The first new features to come to ZPE will be the encoders for both TOML and INI files. I also want to release an XML encoder by the end of February.

ZPE has increased in file size, however, due to the inclusion of ChatGPT. It's worth it though.

Over the next few months I want to add more to ZenPy to make a more complete representation of Python from YASS.

As well as that, I intend to develop ZenLua and ZenPHP. Both of these are in early stages and will be fully open source and available on my GitHub.

ZenLua will transpile YASS to Lua. This is something I've been thinking about for a while now as I use Lua quite a bit. My plan is to make it very easy for myself to write Lua with a language I think is considerably easier to understand.

I made some New Year's Resolutions and plans I want to try and stick to this year.

First of all, welcome to 2025! This is my first 'life' post of the year on my blog. I wish you a happy new year. At the end of 2024 I sat and had a little think about what's really important to me right now. I made a couple of decisions on what needs to change. Let's start with the basics.

Social media

X

While my website states that one of the people who inspired me the most was Elon Musk, I now wholeheartedly dislike what he stands for and have decided that I don't want anything to do with him or his platform. It's not political; I just don't like him anymore. This means that, starting right now, I'm beginning to move from x.com to Bluesky (https://bsky.app/profile/jamiebalfour04.bsky.social), which I am hoping many others will do too, so that it can become the number one platform.

Second, WhatsApp. I rely heavily on WhatsApp, but for the last few months, I have considered moving away from WhatsApp and, generally, Meta. It came up in the staff room the other day, and it reminded me that I was thinking about this a few months back, so I added it to my plans yesterday. I want to move to Signal, a more open, privacy-centred chat platform. The problem is trying to convince others to follow suit.

Facebook is slightly more complex than the two previous ones. I've found some social networks like MeWe, which offer a similar experience to Facebook, but I'm not entirely sure about this yet. This is probably the hardest one out of the lot so far.

Life

I want to prepare myself for the next chapter in my book of life, and that is to move somewhere new. I've lived in this area all my life (apart from 3 years in Edinburgh when I was very young, and my time in halls, when I lived in Edinburgh for most of the year).

Some people who follow me on here know I want to own a boat and live on it at some point in my life. Narrowboating has long been a dream, but it's now less of a dream and more something I could potentially do. I've got more than £150k invested in my house, and the sale of that could give me a narrowboating lifestyle. I want to prepare for this because I want to start by the time I reach forty (that's still 7 years away, though!).

Job

I enjoy my job and think I'm pretty good at it (certainly my knowledge is!). But my passion is coding, and I feel that in the next couple of years, I need to get a new job back in the industry. My New Year's Resolution is to lay down the building blocks for this and find a new job. So, I plan to try to make this my last year of teaching (at least for the moment). If it doesn't happen, I'm still okay with that: I don't want to put pressure on myself to find that new job, and I do like my job at the moment anyway.

If I'm going to be narrowboating, I want to have all this sorted before I move on with that.

ZPE now has ChatGPT integrated. The new ChatGPT object is available within the language and requires you to set up your own API key.

I'm also working on the editor AI features, including AI Suggest, AI Assistant and AI Improve. All of these will connect to my webserver but will require a subscription.

I'm really happy with this being the first feature of 2025.

2024 has been an exciting year. Personally, I believe there have been several key highlights. This is one of those sentimental look-back-at-the-year moments, particularly from my point of view. I mainly make these so I can look back, since a blog is kind of like a journal anyway.

First, in January, I launched my new balf.io platform. balf.io is an external way to view things like my slideshows and documents (DragonSlides and DragonDocs, respectively). It also acts as my URL shortener and redirect system. Overall, balf.io has simplified things that I do on a daily basis. Speaking of DragonDocs, January was also when my new DragonDocs AI was first released, allowing DragonDocs to automatically mark the answers provided.

Also, in January, I released ZPE 1.12.1, which brought the critical changes that made room for LAMEX2.

In February, I updated my smart home to be entirely local for the first time since I built it. Nothing relies on the cloud, making it more streamlined and faster.

The World Wide Web turned 35 years old in March 2024 - a historic moment.

ZPE 1.12.2 was also released in February and was one of the most significant updates in a long time, with LAMEX2 being included, offering up to 4 times the performance of the previous versions and having a much lower memory overhead. LAMEX2 became the standard LAME in version 1.12.3.

In May 2024, I released my first fully functioning ZPE transpiler, ZenPy, and I fixed the plugin system within ZPE (and then split ZPE's core so that parts that were not necessary became plugins). June saw the introduction of breakpoints to ZPE.

In July, we had the first election in over 14 years that did not result in a Conservative government; the Labour Party won instead. ZPE finally got namespaces in July. Also in July, I went to York once again, this time with my mum and dad. It was nice once again, and whilst on holiday I made some critical decisions about my life and what I'm planning on doing in the near future.

In October, my oldest/longest friend got married. It was a spectacular day up in Dunkeld (I stayed for three days), and the wedding itself was out of this world. Also, in October, I finally got back into YouTube and want to continue to do it again. I also finally updated my house infrastructure to use 2.5GbE for everything.

In December, I got my routine MRI scan of my head and spine, and all was clear. To top the year off, I went to my very first gig. I saw Travis (my second favourite band) in Glasgow. It was exciting and one of the best events I have ever attended. Once again, I went with my mum, and my dad came along as well (he's not a fan of Travis the way my mum and I are, so he didn't enjoy it the way we did).

All I have to say now is: let's hope 2025 is a great year, too! Happy New Year when it comes.